Watch Live

The David Pakman Show

7:00 AM - 8:00 AM EST

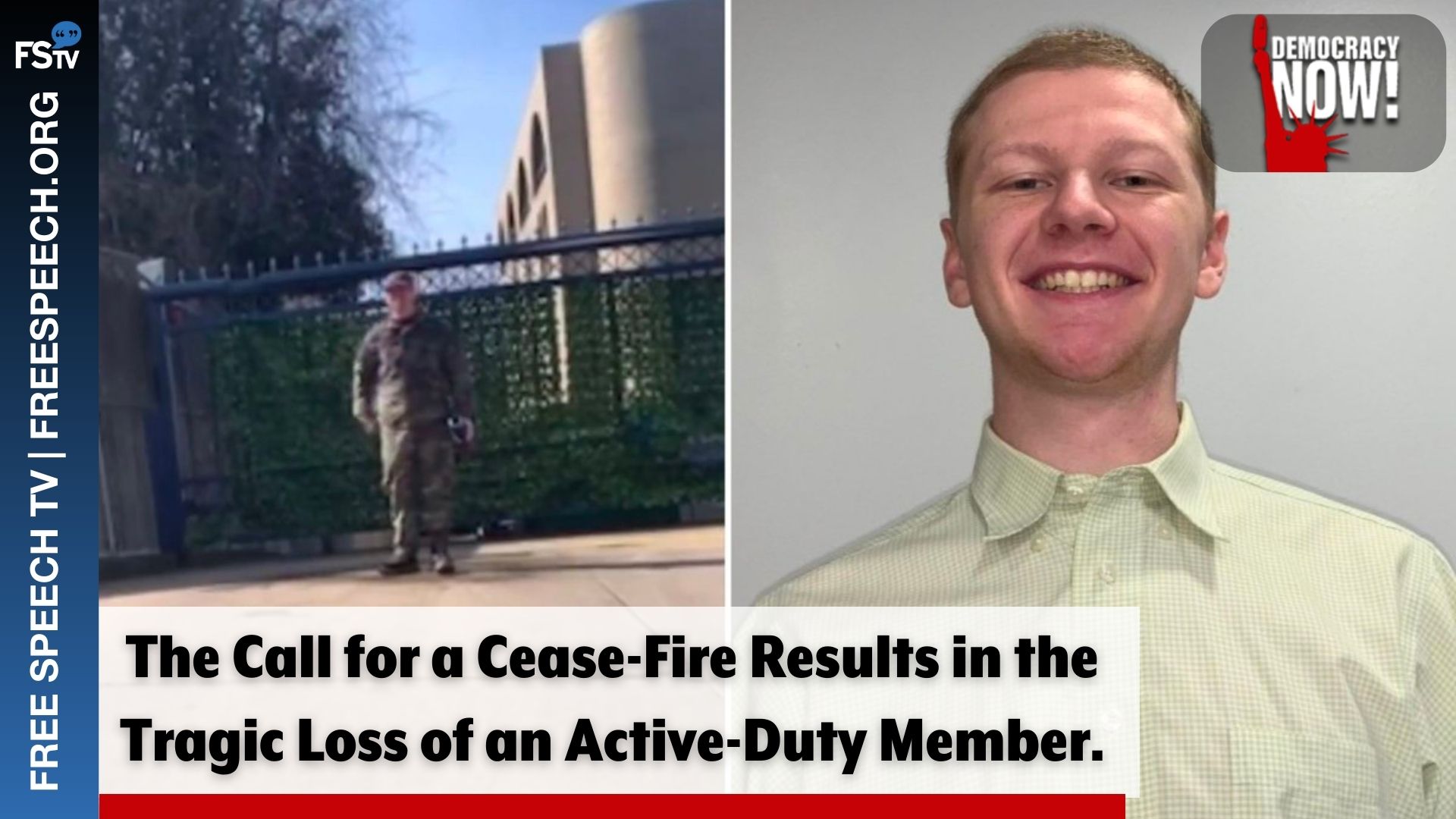

Democracy Now!

8:00 AM - 9:00 AM EST

The Stephanie Miller Show

9:00 AM - 12:00 PM EST

The Thom Hartmann Program

12:00 PM - 3:00 PM EST

The Randi Rhodes Show

3:00 PM - 4:00 PM EST